Introduction

There are so programming situations where there are multiple methods of achieving the same result. Even for something as simple as summing the values of a list of integers, there are at least 5 different methods in Eiffel. Often times we don't go to the trouble of finding out which method is the most efficient and by how much? Creating benchmarks is a chore.

To make benchmark creation and organization less of a chore I created a class in Eiffel-Loop that will allow you quickly compare arbitrary number of routines using an interactive command shell.

Class EL_BENCHMARK_COMMAND_SHELL

The class EL_BENCHMARK_COMMAND_SHELL is an example with a selection of 2 benchmarks:

- Compares 5 different methods of summing an arrayed list of integers

- Compares 3 methods of concatenating an array of strings together

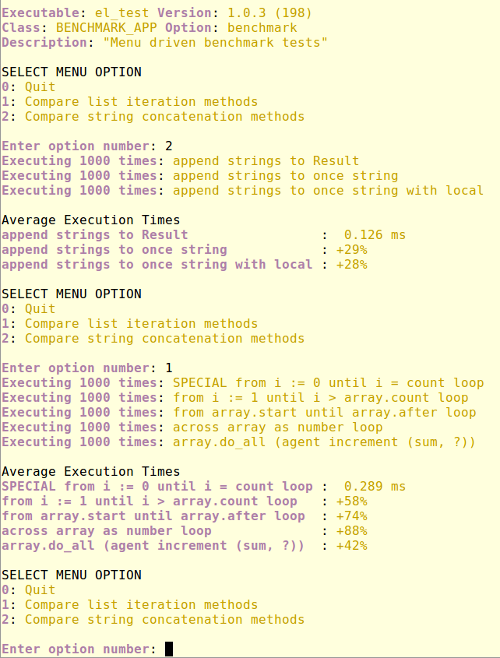

By calling the routine 'execute' the shell enters a command loop by printing a menu of all benchmarks itemized in the 'new_command_table list. Entering a number selects a benchmark to execute. Entering '0' quits the command loop.

The 'make initialization routine takes one argument 'number_of_runs specifying the number of times to run each benchmark routine. The output figures are based on the average execution time.

The routine {EL_BENCHMARK_COMMAND_SHELL}.compare_benchmarks, benchmarks each routine and prints the average execution time of the fastest. The other routine benchmarks figures are displayed as relative to this figure using a '+' or '-' percentage.

class

BENCHMARK_COMMAND_SHELL

inherit

EL_BENCHMARK_COMMAND_SHELL

export

{EL_COMMAND_LINE_SUB_APPLICATION} make

end

create

default_create, make

feature -- Benchmarks

compare_list_iteration_methods

local

actions: like Type_actions; array: ARRAYED_LIST [INTEGER]

i: INTEGER; sum: INTEGER_REF

do

log.enter ("compare_list_iteration_methods")

create array.make_filled (10000)

create sum

create actions.make (<<

["SPECIAL from i := 0 until i = count loop", agent iterate_special_from_i_until_i_eq_count (array.area, sum)],

["from i := 1 until i > array.count loop", agent iterate_from_i_until_i_gt_count (array, sum)],

["from array.start until array.after loop", agent iterate_start_after_forth (array, sum)],

["across array as number loop", agent iterate_across_array_as_n (array, sum)],

["array.do_all (agent increment (sum, ?))", agent iterate_do_all (array, sum)]

>>)

compare_benchmarks (actions)

log.exit

end

compare_string_concatenation

local

actions: like Type_actions; array: ARRAYED_LIST [STRING]

do

log.enter ("compare_list_iteration_methods")

create array.make ((('z').natural_32_code - ('A').natural_32_code + 1).to_integer_32)

from until array.full loop

array.extend (create {STRING}.make_filled (('A').plus (array.count), 100))

end

create actions.make (<<

["append strings to Result", agent string_append (array)],

["append strings to once string", agent string_append_once_string (array)],

["append strings to once string with local", agent string_append_once_string_with_local (array)]

>>)

compare_benchmarks (actions)

log.exit

end

feature {NONE} -- Iteration variations

iterate_across_array_as_n (array: ARRAYED_LIST [INTEGER]; sum: INTEGER_REF)

do

sum.set_item (0)

across array as number loop

sum.set_item (sum.item + number.item)

end

end

iterate_do_all (array: ARRAYED_LIST [INTEGER]; sum: INTEGER_REF)

do

sum.set_item (0)

array.do_all (agent increment (sum, ?))

end

iterate_from_i_until_i_gt_count (array: ARRAYED_LIST [INTEGER]; sum: INTEGER_REF)

local

i: INTEGER

do

sum.set_item (0)

from i := 1 until i > array.count loop

sum.set_item (sum.item + array [i])

i := i + 1

end

end

iterate_special_from_i_until_i_eq_count (array: SPECIAL [INTEGER]; sum: INTEGER_REF)

local

i, count: INTEGER

do

sum.set_item (0); count := array.count

from i := 0 until i = count loop

sum.set_item (sum.item + array [i])

i := i + 1

end

end

iterate_start_after_forth (array: ARRAYED_LIST [INTEGER]; sum: INTEGER_REF)

do

sum.set_item (0)

from array.start until array.after loop

sum.set_item (sum.item + array.item)

array.forth

end

end

feature {NONE} -- String append variations

string_append (array: ARRAYED_LIST [STRING]): STRING

do

create Result.make_empty

from array.start until array.after loop

Result.append (array.item)

array.forth

end

Result.trim

end

string_append_once_string_with_local (array: ARRAYED_LIST [STRING]): STRING

local

l_result: STRING

do

l_result := Once_string

l_result.wipe_out

from array.start until array.after loop

l_result.append (array.item)

array.forth

end

Result := l_result.twin

end

string_append_once_string (array: ARRAYED_LIST [STRING]): STRING

do

Once_string.wipe_out

from array.start until array.after loop

Once_string.append (array.item)

array.forth

end

Result := Once_string.twin

end

feature {NONE} -- Implementation

increment (a_sum: INTEGER_REF; n: INTEGER)

do

a_sum.set_item (a_sum.item + n)

end

feature {NONE} -- Factory

new_command_table: like command_table

do

create Result.make (<<

["Compare list iteration methods", agent compare_list_iteration_methods],

["Compare string concatenation methods", agent compare_string_concatenation]

>>)

end

feature {NONE} -- Constants

Once_string: STRING

once

create Result.make_empty

end

end

Console Output

Output of finalized executable

Github Source Links

class BENCHMARK_APP

class BENCHMARK_COMMAND_SHELL

class EL_BENCHMARK_COMMAND_SHELL

class EL_BENCHMARK_ROUTINES

Points to remember when doing benchmarks

There are a few points that may affect the result and therefore are important to keep in mind when doing benchmarks:

<li>Finalized and workbench code behave quite differently. If the goal is to generate a finalized executable, use finalization for benchmarks. <li>One of the most efficient optimization options is inlining. It causes a slightly bigger executable, but its performance is usually better. <li>Use other optimization options, such as array optimization to get better results. <li>Some options prevent some optimizations. E.g., requesting for a trace on an exception or enabling assertions in finalized mode will give slower code. <li>Compilers and libraries do change: results obtained for one release may be different from the results obtained for another release. <li>Benchmarks need to be realistic, because modern compilers may remove some code if it has no effect. <li>Generated code depends on operational conditions. E.g., the same code for single-threaded and SCOOP programs may suffer from different run-time overhead. <li>Benchmark need to be as close to the real program as possible. UsingINTEGER instead of a reference type in a container or replacing a polymorphic feature call with a non-polymorphic one can create wrong impression about the expected behavior.

In the original example above a value of every array item is assigned to a local variable that is not used. With sufficient inlining size some C compilers will figure out that the loop has no effect and eliminate it altogether.

PS. One more way to iterate over all the elements of an array is to calldo_all on the container and to pass an agent that does the work for each item.

Points to remember

Hi Alexander,

thanks for contributing this list of tips.

I changed my example to sum the integers in case the compiler optimized away the local assignment.

I also updated my article to include your 'do_all' suggestion. Surprisingly it turned out to be the fastest method. But it made sense when I looked at the source code and found it is calling a {SPECIAL}.do_all_in_bounds on area_v2. This gave me an idea to include a 5th method that operates directly on area_v2.

regards

Finnian

Comparison to EiffelStudio 16.05

EiffelStudio 16.05 introduced some optimizations to cursor classes and code generation, so you may want to redo the benchmarks and compare them to 15.12 to see if there are any improvements.